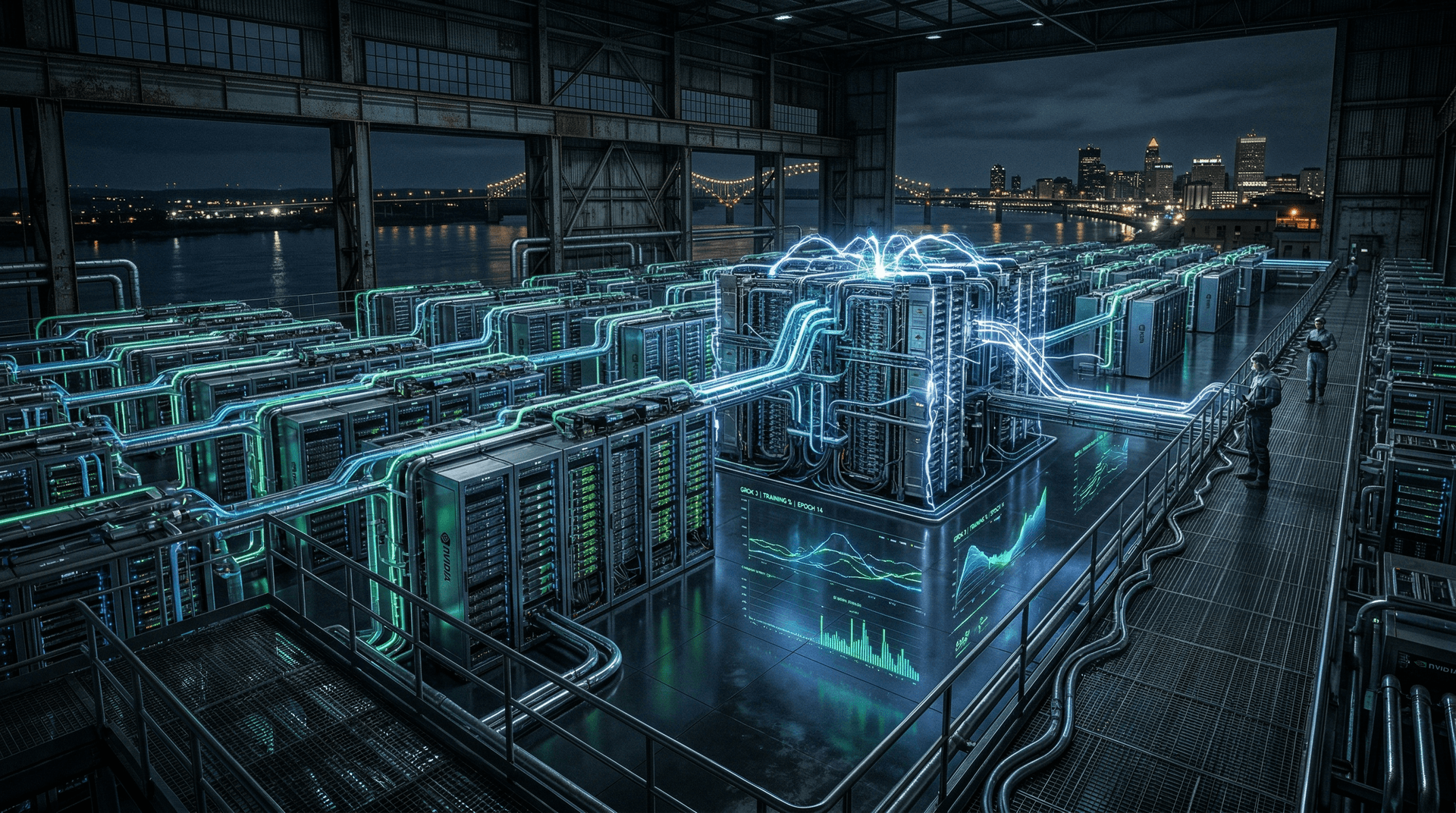

In a bold escalation of the AI arms race, Elon Musk's xAI announced in early September 2024 the activation of Colossus, billed as the most powerful AI training system on the planet. Comprising 100,000 liquid-cooled NVIDIA H100 GPUs, this Memphis-based supercluster was assembled in a blistering 122 days, a feat that underscores the frenetic pace of AI infrastructure buildouts. For business executives and startup founders in the AI ecosystem, Colossus isn't just a technical milestone; it's a stark signal of the capital-intensive future awaiting frontier AI development.

The Birth of Colossus: Speed and Scale

xAI, founded in July 2023 by Musk and a team of ex-DeepMind, OpenAI, and Tesla engineers, has positioned itself as a 'maximally truth-seeking' AI challenger. After raising $6 billion in Series B funding in May 2024—valuing the startup at $24 billion post-money—the company pivoted to hardware supremacy. Colossus represents the culmination of that capital deployment, with GPUs sourced amid global shortages and installed in a former Electrolux factory in Memphis, Tennessee.

Musk tweeted: "Colossus 100k H100 cluster is now training Grok 3. Never a dull moment." The cluster's liquid-cooling system enables high rack density, minimizing thermal throttling and maximizing sustained FLOPS (floating-point operations per second). At peak, it delivers exaflop-scale compute for AI-specific, low-precision workloads. On paper that dwarfs public benchmarks like Frontier (1.2 exaflops), though Frontier's figure is measured at 64-bit precision, so the two numbers are not directly comparable.
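
To put "exaflop-scale" in rough numbers, here is a back-of-envelope sketch. It assumes a vendor peak of about 989 teraFLOPS of dense BF16 per H100 (SXM variant, no sparsity) and a purely hypothetical 40% utilization; neither figure comes from xAI, and real training throughput depends heavily on the model and the networking.

```python
# Back-of-envelope peak compute for a 100,000-GPU H100 cluster.
# ASSUMPTION: ~989 TFLOPS dense BF16 per H100 SXM (vendor peak, no sparsity).
# ASSUMPTION: 40% utilization is a hypothetical figure, not an xAI number.

H100_DENSE_BF16_TFLOPS = 989.0
TFLOPS_PER_EXAFLOP = 1_000_000  # 1 exaFLOPS = 10^6 teraFLOPS


def cluster_peak_exaflops(gpu_count: int,
                          tflops_per_gpu: float = H100_DENSE_BF16_TFLOPS) -> float:
    """Aggregate theoretical peak, ignoring networking and scheduling losses."""
    return gpu_count * tflops_per_gpu / TFLOPS_PER_EXAFLOP


def sustained_exaflops(peak_exaflops: float, utilization: float) -> float:
    """Scale the theoretical peak by an assumed utilization between 0 and 1."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_exaflops * utilization


peak = cluster_peak_exaflops(100_000)
print(f"Theoretical peak (BF16): {peak:.1f} exaFLOPS")
print(f"At 40% utilization:      {sustained_exaflops(peak, 0.40):.1f} exaFLOPS")
```

Under these assumptions the cluster lands near 100 exaFLOPS of low-precision peak, which is why a like-for-like comparison with a 64-bit supercomputer benchmark needs care.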

Construction timelines are eye-popping: Partnering with NVIDIA, Dell, and Supermicro, xAI transformed a vacant factory into a hyperscale data center faster than hyperscalers like AWS or Azure typically manage expansions. Musk hinted at expansions: "Next gen... ~300k H100 equivalent GPUs online by end of year, then >>1M H100 equiv soon after."

Business Implications: A Compute Arms Race

For executives, Colossus highlights the AI infrastructure moat. Training state-of-the-art models like Grok 3—targeted for December 2024 release—requires not just GPUs but power (estimated 150MW+), cooling, and networking fabric (NVIDIA InfiniBand). Cost? Roughly $3-4 billion for GPUs alone at $30,000-$40,000 per H100, plus $1B+ in ancillary infrastructure. xAI's war chest covers this, but smaller startups face a grim reality.

| Metric | Colossus (xAI) | Comparison |
|--------|----------------|------------|
| GPUs | 100,000 H100s | Meta: 24k H100s (growing to 350k); OpenAI/MSFT: ~10k+ Stargate planned |
| Build Time | 122 days | Typical: 18-24 months |
| Power Draw | ~150MW | Equivalent to small city |
| Purpose | Grok 3 training | Frontier LLMs |
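
The GPU cost estimate is simple multiplication, and it can be reproduced in a few lines. This is a minimal sketch using only the article's own figures (100,000 GPUs, $30,000-$40,000 per H100, at least $1 billion in ancillary infrastructure); the function name is illustrative.

```python
# Reproduces the article's GPU capex arithmetic.
# All inputs are the article's estimates, not disclosed xAI figures.

GPU_COUNT = 100_000
UNIT_PRICE_LOW_USD = 30_000
UNIT_PRICE_HIGH_USD = 40_000
ANCILLARY_FLOOR_USD = 1_000_000_000  # the "$1B+" ancillary estimate, treated as a floor


def gpu_capex_range(gpu_count: int, price_low: int, price_high: int) -> tuple[int, int]:
    """Return (low, high) GPU spend in USD for a given unit price range."""
    return gpu_count * price_low, gpu_count * price_high


low, high = gpu_capex_range(GPU_COUNT, UNIT_PRICE_LOW_USD, UNIT_PRICE_HIGH_USD)
print(f"GPUs alone:       ${low / 1e9:.1f}B to ${high / 1e9:.1f}B")
print(f"With ancillaries: at least ${(low + ANCILLARY_FLOOR_USD) / 1e9:.1f}B "
      f"to ${(high + ANCILLARY_FLOOR_USD) / 1e9:.1f}B")
```

Because the ancillary figure is a floor rather than a point estimate, the all-in range should be read as a lower bound.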

This scale tilts the field toward well-funded incumbents. Startups pivoting to open-weight models (e.g., Llama 3.1) or fine-tuning can compete, but pre-training from scratch demands nine-figure investments at minimum, and ten-figure ones at Colossus scale. VCs like a16z and Sequoia are doubling down on 'AI infra' bets, with funds earmarked for clusters.

Startup Ecosystem Shakeup

In the startup world, Colossus amplifies trends:

1. Inference Over Training: Players like Groq (LPUs), Together AI, and Fireworks.ai thrive by optimizing inference on rented compute. xAI's focus on training leaves room for these 'picks and shovels' firms.

2. Vertical AI Applications: With frontier models commoditizing, startups like Harvey (legal AI, $100M raised July 2024) or Perplexity (search) layer apps on APIs from xAI, OpenAI, or Anthropic.

3. Compute Democratization? Musk claims Colossus will enable 'truth-seeking AI' accessible via xAI's API. Early Grok access via X Premium hints at freemium models, but enterprise tiers could mirror OpenAI's per-seat ChatGPT Team pricing ($25-$30 per user per month).

Funding flows: Post-Colossus buzz, AI infra startups like Crusoe Energy (clean compute) and Lambda Labs saw valuation pops. However, energy constraints loom—U.S. grid upgrades lag, pushing deals with nuclear firms like Kairos Power (Google's SMR partner).

Competitive Landscape and NVIDIA's Dominion

Colossus laps rivals:

- Meta: 24k-GPU H100 clusters now, targeting 350k H100s and roughly 600k H100-equivalents of total compute.

- OpenAI/Microsoft: Stargate (5M GPUs by 2028) in pipeline, but ramping slower.

- Google/Anthropic: TPU v5p clusters competitive but proprietary.

NVIDIA reaps windfalls: H100 demand propelled its market cap past $3 trillion in June 2024. xAI's order underscores the hype around Blackwell (B200), and Musk has flagged upgrades. Shares of suppliers Dell and Supermicro jumped 5-10% post-announcement.

Risks? Geopolitics (Taiwan chip reliance), power shortages, and talent wars. xAI poached engineers aggressively, offering equity in a $24B entity.

Executive Playbook: Navigating the New Era

C-suite leaders should:

- Audit Compute Spend: Shift to spot markets (CoreWeave, Lambda) for flexibility.

- Partner Strategically: xAI's API could undercut OpenAI pricing; beta test now.

- Talent Acquisition: Offer RSUs tied to AI outcomes.

- Sustainability Focus: Liquid cooling can cut PUE (power usage effectiveness) to around 1.1; prioritize green infra.
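
For readers weighing the sustainability point, PUE is total facility power divided by IT equipment power, so a lower PUE means more of each megawatt reaches the hardware. The sketch below applies that definition to the article's ~150MW estimate. The 1.5 baseline is a hypothetical comparison point rather than a measured figure for any specific facility, and the per-GPU number includes each GPU's share of CPUs, networking, and storage.

```python
# Illustrates PUE = total facility power / IT equipment power.
# FACILITY_MW and PUE_LIQUID are the article's estimates.
# PUE_BASELINE is a HYPOTHETICAL comparison value chosen for illustration.

GPU_COUNT = 100_000
FACILITY_MW = 150.0
PUE_LIQUID = 1.1
PUE_BASELINE = 1.5


def it_power_mw(facility_mw: float, pue: float) -> float:
    """Power that reaches IT equipment: facility power divided by PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return facility_mw / pue


def overhead_mw(facility_mw: float, pue: float) -> float:
    """Power spent on cooling, conversion losses, and other non-IT load."""
    return facility_mw - it_power_mw(facility_mw, pue)


for label, pue in (("liquid-cooled", PUE_LIQUID), ("hypothetical baseline", PUE_BASELINE)):
    it_mw = it_power_mw(FACILITY_MW, pue)
    kw_per_gpu = it_mw * 1000 / GPU_COUNT
    print(f"{label}: PUE {pue} -> {it_mw:.1f} MW IT load, "
          f"{overhead_mw(FACILITY_MW, pue):.1f} MW overhead, "
          f"{kw_per_gpu:.2f} kW of IT power per GPU")
```

On a fixed power budget, the gap between the two cases is capacity that can either run more hardware or not be purchased at all.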

Sam Altman (OpenAI) has publicly described compute as a bottleneck; Colossus proves it. As Grok 3 eyes multimodality and reasoning (rivaling OpenAI's o1, released September 12), benchmarks will dictate winners.

The Road Ahead

Colossus cements xAI as a top contender, blending Musk's bravado with execution. For the startup ecosystem, it's a call to specialize: build on giants' shoulders rather than racing to match their scale. By December, Grok 3's performance could redefine enterprise AI adoption, pressuring laggards.

In AI's gold rush, compute is the new oil. xAI just struck the biggest gusher yet.

Top Shelf News analysis based on public announcements as of September 18, 2024.